TL;DR

Traditional governance is built on quarterly meetings and static PDFs. AI moves too fast for "point-in-time" snapshots to matter.

Most organizations skip the "thinking work" and rush to technical controls. Governance fails when no one agrees on who owns the risk or what the business is actually trying to solve.

Visibility means knowing not just which tool is used, but also how it's used.

The real-time, high-intensity rigors we are building for AI governance are actually the blueprint for the future of all corporate risk management.

Governing the Ungovernable

Most AI governance conversations start in the wrong place.

These conversations often start with risk, with compliance checklists, and with what you’re not supposed to do. And while that looks responsible on paper (and sounds right in an ivory tower), it almost never accounts for how people actually work and what they’re trying to accomplish. It also can’t account for the gap between what an organization is required to do and what it needs to do to move forward.

Instead, a more effective starting point is to look at the existing ways your teams are already experimenting with AI to solve real business problems. For example, start by reverse-engineering how your highest-performing teams use AI tools to drive efficiency or innovation, and use their workflows as a model for what an effective, secure deployment could look like. Focus first on enabling and scaling these productive patterns, rather than defaulting to prevention and restriction. By starting with what is actually working, you create a governance approach that aligns with real user behavior and amplifies positive outcomes, making incremental improvements as you learn.

In my experience, the result of most governance programs reads more like a legal disclaimer or a Terms of Service agreement that you definitely, totally read all the way through.

What we’re learning is that AI governance, much like using AI tools, is much more of a success formula than a rulebook, and results can vary wildly. A rulebook tells you where the boundaries are, but a success formula tells you how to stay within them while moving at the speed of modern business.

I find the AI governance conversation to be a lot like the early days of the AI security conversation, which was very tactical and very specific but often lacked any strategy at all. It’s a mad rush to the immediate technical concerns, which is understandable, but not always the best approach. In my 20+ years in this industry, I’ve learned that the tactical goes a whole lot better when you can first agree on the strategy.

You can’t know that at the time, of course. The entire cyber industry is built on being reactive, and old habits die hard. That’s why it’s so important to look back and reassess what you know now, and challenge what you thought you knew back then. That often comes out in the form of creating or adopting a strategy for a set of problems and opportunities.

That word, “Strategy,” can carry a lot with it, both good and bad. If done poorly, it leaves too much to interpretation and leaves people in a flurry of busywork without knowing why they’re doing it.

I saw this clearly when the AI Security Shared Responsibility Model debate was happening. Dozens of technical frameworks emerged overnight, but nobody was doing the “tier zero” strategy and level-setting work. Instead, the industry jumped straight to: "Here is the exact set of controls you need.” Excellent! Yes, you need some of that, but before we reinvent the wheel, let's pause and strategically look at what we already have. Let’s see if there's a way to align existing practices with what this new technology demands.

The same thing is happening with AI governance, and it’s time we look back and do better. How do you govern the ungovernable?

To do that, we’ve got to talk about Risk first, with a capital “R.”

The Risk Management Challenge

Peter Bernstein opened Against the Gods: The Remarkable Story of Risk with a simple observation that, for most of human history, we haven’t managed “risk” at all. We chalked it up to gods, oracles, and fate.

The idea that the future could be understood, measured, and acted upon is relatively recent in human history. And even after centuries of developing the tools to measure risk and act on it, humans are still really bad at it.

AI governance has the same problem, just one layer up.

The term “Risk Management” implies that you can identify and give shape to what you’re managing. With AI, most organizations can’t even answer the first question: “What are people actually doing with it?” On top of that, people are using personal accounts in addition to whatever they have access to at work, and teams are using tools the security team has never heard of.

The first step is really about visibility. You can’t manage what you can’t see, and you can’t govern what you don’t understand.

The traditional approach to AI governance treats AI as a one-dimensional construct. If you just block the “bad” stuff and allow the “good” stuff, then you’re golden, right? Well, not exactly. AI is built differently, and the risk isn't just in which tool someone uses, but in what they do with it. This means you need ways to uncover how AI is actually being used across your organization. This is where a vendor like Harmonic Security can fit into the strategy as a lens to help you understand employee behavior so you can implement governance based on reality rather than guesswork.

That discovery process can include deploying AI discovery tools, running audits to identify shadow IT and unsanctioned AI deployments, and working with business units to catalog the use cases already in flight. Reviewing network logs for new API traffic, looking at browser extensions, and surveying employees about their AI-driven workflows are also on the table. All of these steps can bring visibility, give you a foundation for informed governance, and help you turn strategy into action.
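As one illustration of the log-review step, here is a minimal Python sketch that counts requests to AI services in proxy logs and separates out the unsanctioned ones. Everything specific in it is an assumption made for the example: the `timestamp user domain` log format, the `.example` domain names, and the function name `summarize_ai_traffic`. Real proxy logs and vendor domains will differ, so treat it as a starting point rather than a finished discovery tool.

```python
from collections import Counter

# Hypothetical inventories for illustration only; replace with your own.
KNOWN_AI_DOMAINS = {"api.vendor-a.example", "api.vendor-b.example"}
SANCTIONED_DOMAINS = {"api.vendor-a.example"}


def summarize_ai_traffic(log_lines):
    """Count requests per (user, domain) for known AI domains.

    Assumes each log line is 'timestamp user domain', whitespace-separated.
    Returns two Counters: all AI usage, and usage of unsanctioned domains.
    """
    all_usage = Counter()
    unsanctioned = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines rather than guess at their meaning
        _, user, domain = parts
        domain = domain.lower()
        if domain not in KNOWN_AI_DOMAINS:
            continue  # not AI traffic we know about
        all_usage[(user, domain)] += 1
        if domain not in SANCTIONED_DOMAINS:
            unsanctioned[(user, domain)] += 1
    return all_usage, unsanctioned


sample = [
    "2024-05-01T09:00:00Z alice api.vendor-a.example",
    "2024-05-01T09:01:00Z bob api.vendor-b.example",
    "2024-05-01T09:02:00Z bob api.vendor-b.example",
    "2024-05-01T09:03:00Z carol intranet.corp.example",
    "malformed line",
]
usage, shadow = summarize_ai_traffic(sample)
for (user, domain), count in shadow.items():
    print(f"unsanctioned: {user} -> {domain} ({count} requests)")
```

A count like this only tells you which tool is in use. It says nothing about how the tool is being used, which is why it belongs alongside the surveys and business-unit conversations rather than in place of them.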

Humans have always been bad at estimating risk. We overweight immediate threats and underweight slow-moving ones. We confuse the absence of evidence with evidence of absence. We treat uncertainty as a reason to delay rather than a reason to act.

AI governance, left to those patterns, will lead to the same outcomes: reactive, late, and organized around the last problem rather than the next one.

However, AI has some unique structural characteristics that make this all harder.

The Tier Zero Problem

A challenge I have not seen mentioned when it comes to AI, broadly speaking, is that it is not like other technology waves. AI is both a vertical and a horizontal wave at the same time. It touches every industry, every company, and every employee, from an intern to the founders of the company. It’s an unavoidable part of corporate (and much of personal) life.

That horizontal, all-disseminating aspect makes AI a lot like cybersecurity.

And what do we like to do in cyber? We like to scramble to the tactical work. We like specific controls, settings, and vendor-specific checklists. We love new acronyms and new frameworks even more. We sometimes like to work on these things more because they're immediate and tactical, and they give us a sense of control. We like to skip what I call the “Tier Zero” work.

Tier Zero is the strategic level-setting work that helps make everything else coherent. Tactics only succeed when you agree on the strategy first. Without it, everything is left to interpretation, and questions like these go unanswered:

What business problem are we solving?

Who owns this risk?

Who is accountable when something goes wrong?

How will we know when the work is finished?

What capabilities do we already have to support this?

What has to be true in order for this to work?

What would you say you “do here”?

OK, that last one was from Office Space.

Half the battle on AI Governance is discovering what is true. What do we actually know? What can we prove? What is likely to become true? Most organizations skip this entirely and go straight to building frameworks on assumptions no one has tested.

The reason this matters so much right now is that horizontal framing we talked about. Every business has to care about cyber, and so does every person. Now the same is true for AI, right or wrong.

And if the history of how the security industry has handled horizontals is any indication of how the AI governance story might go, we have our work cut out for us.

We've got a rapidly changing technology and capabilities increasing on what feels like a daily basis. What's possible to do with AI right now is genuinely at the limit of your imagination. We've never seen a tectonic shift like this in modern history, and we cannot be passive participants.

Without that Tier Zero clarity, critical tasks like data governance and model integrity become nobody’s responsibility until something goes wrong. To prevent this, ownership of the Tier Zero strategy should be made explicit and assigned to a clear leader or team. In most organizations, this means placing accountability on a cross-functional group that brings together the CISO, technology leadership, legal, and business owners.

When everyone knows exactly who is responsible for setting the strategic foundation, it becomes much easier to drive action and accountability, and to ensure that governance is actually embedded into day-to-day operations. But once you have that alignment, you still have to turn that strategy into something your teams can actually read and follow. Harmonic’s AI Policy Studio is a great starting point for generating something that isn’t a 40-page legal disclaimer of all the things you’re not allowed to do.

What Can Governing Humans Tell Us?

Before getting too deep into how I think we should govern AI, we must also consider what it means to govern humans. The problems are more similar than we may realize.

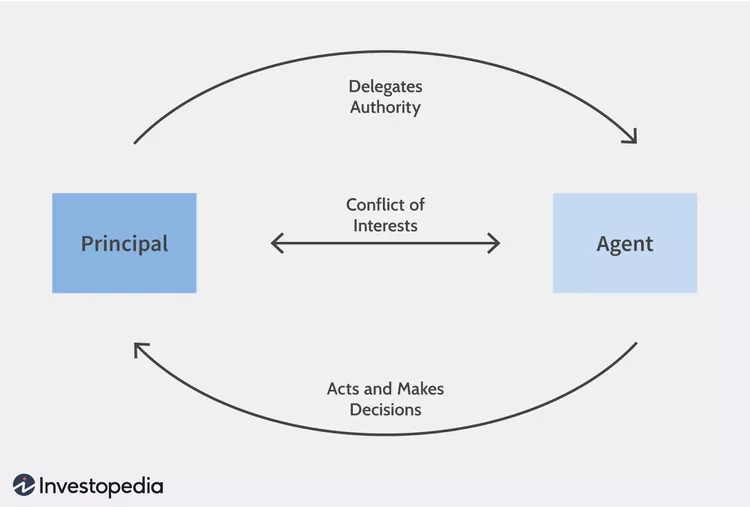

To do this, I want to borrow from the Principal-Agent Problem.

The Principal-Agent Problem arises when there is a conflict in priorities between a person or group of people (the Principal) and the representative authorized to act on their behalf (the Agent). This conflict typically happens when there is information asymmetry, that is, when the Agent is better informed or has more knowledge than the Principal.

Sound familiar yet?

Without appropriate incentives, such as salary, bonuses, stock options, and other non-monetary benefits, the Agent may not act in the Principal's best interests, either intentionally or unintentionally.

Without the right visibility, instructions, guardrails, hard limits, and after-action reviews, an AI system may intentionally or unintentionally act against a company's best interests. That outcome has to be designed and baked into the system. It cannot be left to chance, to prompt engineering, or to the intentions of AI systems you have no control over.

The key piece here is the separation of "ownership" and "control." As companies give more tasks to AI across all departments, the "ownership" remains with the company, while the "control" slips further and further away. Companies delegate a degree of control, but will still be responsible for the outcomes.

Pretty soon, all you're doing is just "monitoring the situation," which is not the same as governing it.

Me monitoring my swarm of AI agents after I tell them to make me a $1 billion SaaS without making any mistakes.

So then, how do you address the Principal-Agent problem as it relates to AI?

This is where orchestration enters the picture, because the Principal-Agent problem is fundamentally an orchestration problem. Who coordinates the agents? Who sets the instructions? Who monitors outputs and intervenes when something drifts?

It's all about clarifying expectations: setting guardrails, applying security controls, using approved models, and monitoring the results. Active governance, not passive, and not just on paper. Orchestration is what makes policy operational.
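To make "policy operational" a little more concrete, here is a minimal Python sketch of an orchestration layer that checks each agent request against an approved-model list and hard limits, and records every decision for after-action review. The class names (`Policy`, `Orchestrator`), the spend limit, and the model and action names are all hypothetical, invented for this example; a real deployment would sit in front of actual model calls and tie into your own identity and logging systems.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    approved_models: set          # models the organization has sanctioned
    max_spend_usd: float          # hard limit per action
    blocked_actions: set = field(default_factory=set)


@dataclass
class Orchestrator:
    policy: Policy
    audit_log: list = field(default_factory=list)

    def authorize(self, agent, model, action, spend_usd=0.0):
        """Return True if the request is within policy; record every decision."""
        if model not in self.policy.approved_models:
            reason = "model not approved"
        elif action in self.policy.blocked_actions:
            reason = "action blocked"
        elif spend_usd > self.policy.max_spend_usd:
            reason = "spend over hard limit"
        else:
            reason = None
        allowed = reason is None
        # Log allowed and denied requests alike, so reviews see the full picture.
        self.audit_log.append(
            {"agent": agent, "model": model, "action": action,
             "allowed": allowed, "reason": reason}
        )
        return allowed


# Hypothetical policy and requests, for illustration only.
policy = Policy(
    approved_models={"model-a"},
    max_spend_usd=50.0,
    blocked_actions={"delete_records"},
)
orchestrator = Orchestrator(policy)

ok = orchestrator.authorize("billing-agent", "model-a", "draft_invoice", spend_usd=5.0)
bad_model = orchestrator.authorize("billing-agent", "model-x", "draft_invoice")
bad_action = orchestrator.authorize("cleanup-agent", "model-a", "delete_records")
too_costly = orchestrator.authorize("ads-agent", "model-a", "buy_ads", spend_usd=500.0)
```

The design choice worth noting is that the check and the audit record happen in the same place. The company keeps a record of what it delegated and what it refused, which is the difference between governing and just "monitoring the situation."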

So how do you do that?

Here is something most organizations skip entirely before writing a single policy: they don’t study how their people actually work.

Not how they're supposed to work, but how they “actually work.”

I’m talking about the tool preferences, the informal processes, the workarounds, and the “weird bugs.” The legacy system that has been running for a decade because nobody wants to touch it. The information that flows through Slack instead of the ticketing system. The spreadsheet someone built in 2019 that three teams depend on, but no one knows why. This is where we’ll see AI applied first: at the pain points and the chokepoints.

AI governance that ignores all of that doesn't govern anything real. It governs a fictional organization that exists only on an org chart.

The question isn't just "what risks does AI introduce?" It's "what is this organization already trying to become, and how does AI fit into that trajectory?" Governance should work with the grain of how people operate, not against it.

Think of a keypad lock where two or three buttons have worn paint, and the others look brand new. Nobody told you the combination, but it’s pretty obvious when you look at it. The worn keys are the informal processes, but they are somehow load-bearing nonetheless. A governance framework that ignores the worn keys and only works with the pristine ones isn't governing anything.

Build the AI governance around reality, not what you wish would happen.

The End of Static Governance

What got your governance program here won’t get it there when it comes to governing AI.

You can’t apply static thinking to systems that change continuously. Traditional governance assumes that once something is launched, the hard work is done. You write the policy, you set the controls, you schedule the quarterly review, and you get all the nods from all the stakeholders. You rinse and repeat with very little iteration or change.

But AI systems don’t work that way, and neither do the people in your business using them.

AI systems adapt based on data and context, and they don’t behave deterministically. And most importantly, the way people use AI will change faster than any committee that meets once a month can possibly track.

If you’re approaching AI governance with a 2010s mindset, you’ve already lost. We have spent decades treating governance as a series of bureaucratic hurdles with steering committee meetings that happen once a quarter, static frameworks that sit in PDFs, and point-in-time snapshots that are obsolete by the time you see them.

When it comes to AI, that legacy model fails in a potentially dangerous way.

Governing AI requires an intensity of focus and a "security lens" unlike anything we’ve ever managed. It is too multifaceted and shifts too fast for a checklist to capture. But there is a massive paradox at the heart of this challenge, in that the only way to govern these models is to lean directly into them.

You cannot co-shape or hope to govern a future you are afraid to touch and learn about.

To even have a chance at oversight, you must embrace a model of co-governance in which we operate alongside the technology in real time.

This isn't about Sci-Fi scenarios or "Terminator" style doomsday stories. This is about the real tension happening right now in every company, with the immense pressure to adopt AI clashing with the systemic fear of getting it wrong.

This fear isn't just about the technology, but more about internal corporate structures and institutional inertia. The larger the firm, the more siloed, political, and territorial it is by nature. Now, add onto this the inherently complex, cross-silo nature of cybersecurity, and AI risk comes into direct conflict with those dynamics.

If corporate governance structures aren't designed to span these territories, very little gets delivered in terms of transformative effort. You end up stuck with low-hanging fruit or "quick wins" that don't actually move the needle on safety.

Traditional governance models, with their committees, static meetings, and PDFs, reinforce these silos. Everyone stays in their lane, waiting for their turn to say "no" or “nothing from my end, thanks.”

The answer isn’t to give up on governance, but to govern possibilities, as well as behaviors.

What Good Looks Like

AI governance is the biggest opportunity security leaders have had in years to earn a real seat at the table. Not as the team that says no, but as the team that makes responsible AI adoption actually work.

If you want to co-shape the future, you have to be close to the tech that’s doing the shaping. And once you're that close, you realize the tool you were afraid of is actually the only thing capable of fixing the broken, manual oversight of the past.

Learning how to govern AI is about building a governance engine that finally keeps pace with modern business. Good AI governance does three things:

It meets the organization where it actually is, not where a framework assumes it should be. It starts with what already exists before it builds anything new.

It gives anyone in the organization a clear, repeatable path to use AI well, not just the people who already know how.

It orchestrates across the organization rather than governing from within a single silo.

Mostly, good AI governance uses the scaffolding that is already there, and uses AI to amplify the impact. What you get from that is a workforce that can execute consistently and confidently with AI, regardless of their starting point.

Here's a spoiler alert most organizations are missing: the rigors we are being forced to develop for governing AI shouldn't be an "AI Only" thing. The evolution of AI governance is actually the blueprint for the evolution of governance as a whole.

The goal isn't a governance framework that covers every scenario. It's one that anyone can use and that keeps pace with the technology it’s trying to govern.

What got you here won’t take you where you want to go, but this is a start.
